Background

For decades, randomised controlled trials (RCTs) have been regarded as the gold standard for evaluating the efficacy and safety of healthcare interventions. Their strength lies in randomisation, which, when properly implemented, minimises confounding and supports strong causal inference. However, RCTs are not without limitations. They are expensive, time-consuming, and often conducted in highly controlled environments that may not reflect routine clinical practice. Strict eligibility criteria, short follow-up periods, and protocol-driven care can limit generalisability.

Observational studies, by contrast, draw on data generated through routine healthcare delivery, registries, claims databases, and electronic health records. This allows them to capture broader and more diverse patient populations, longer follow-up, and outcomes that matter to patients, clinicians, and health systems. Observational designs are particularly valuable when randomisation is unethical or impractical, when studying rare diseases or long-term outcomes, and when assessing how interventions perform outside trial settings. In an era of personalised medicine and complex care pathways, these strengths have become increasingly important.

Recognition of the value of observational evidence has grown substantially among regulators and health technology assessment (HTA) bodies. Agencies such as the FDA, EMA, and NICE now explicitly acknowledge the role of real-world evidence (RWE) in regulatory decision-making, post-marketing surveillance, and reimbursement assessments. This shift reflects both pragmatic necessity and methodological progress. Advances in causal inference methods, data linkage, and computing power have improved the credibility of observational analyses, enabling researchers to address questions that RCTs alone cannot answer. The positive implication of this recognition is clear: observational studies are no longer seen merely as hypothesis-generating, but as a legitimate component of the evidence base, provided they are designed, analysed, and interpreted appropriately.

Despite this progress, it is crucial to recognise that not all observational studies are designed with the same inferential goals. Broadly, three modelling frameworks can be distinguished: causal inference, prediction, and association (or explanatory analysis). Causal inference frameworks aim to estimate the effect of an intervention or exposure on an outcome, under clearly defined assumptions. Prediction frameworks focus on forecasting outcomes for individuals or populations, prioritising accuracy over causal interpretation. Association-based analyses, meanwhile, describe relationships between variables without attempting to infer causality, often for exploratory or explanatory purposes.
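One way to make the distinction concrete is to write down the quantity each framework targets. The notation below is an assumption introduced for illustration (standard potential-outcomes notation, not taken from any of the tools discussed here): $A$ is a binary treatment or exposure, $Y$ the outcome, $X$ a vector of covariates, and $Y^{a}$ the outcome that would be observed if treatment were set to $a$.

```latex
\begin{align*}
\text{Causal inference:} \quad & \psi_{\text{causal}} = E\left[Y^{a=1}\right] - E\left[Y^{a=0}\right] \\
\text{Association:}      \quad & \psi_{\text{assoc}}  = E\left[Y \mid A = 1\right] - E\left[Y \mid A = 0\right] \\
\text{Prediction:}       \quad & f(x) = E\left[Y \mid X = x\right], \text{ judged by predictive accuracy}
\end{align*}
```

In this sketch, $\psi_{\text{assoc}}$ equals $\psi_{\text{causal}}$ only under additional assumptions such as exchangeability (no unmeasured confounding), consistency, and positivity. A prediction model $f$ may use any covariate that improves accuracy, so its coefficients generally carry no causal interpretation.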

Although these frameworks are conceptually distinct, confusion between them is widespread in medical research. Association is frequently and incorrectly interpreted as causation, while predictive models are sometimes presented as evidence of treatment effect. This confusion has consequences, particularly when evidence is synthesised across studies. HTA agencies and regulators are primarily interested in the causal question: does a technology improve outcomes compared with an alternative? As a result, when observational evidence is used to inform decisions about effectiveness or cost-effectiveness, it is implicitly or explicitly expected to align with a causal inference framework. Failure to recognise this distinction risks undermining the usefulness of observational evidence.

Current Practice of Synthesising Observational Evidence

Current approaches to evidence synthesis involving observational studies are largely inherited from frameworks developed for RCTs. Systematic reviews are typically conducted and reported according to PRISMA guidelines, with study quality or credibility assessed using tools such as ROBINS-I, the Newcastle–Ottawa Scale, or design-specific checklists. These tools focus on domains such as confounding, selection bias, missing data, and outcome measurement, and they play an essential role in identifying threats to internal validity.

However, while these guidelines and tools are valuable, they share an important limitation: they rarely require reviewers to distinguish explicitly between the modelling frameworks used by the included observational studies. A study estimating a causal treatment effect may be assessed alongside a purely associative cohort analysis or a prognostic model, provided they address a similar population and outcome. From a risk-of-bias perspective, these studies are often treated as comparable, despite having fundamentally different inferential aims.

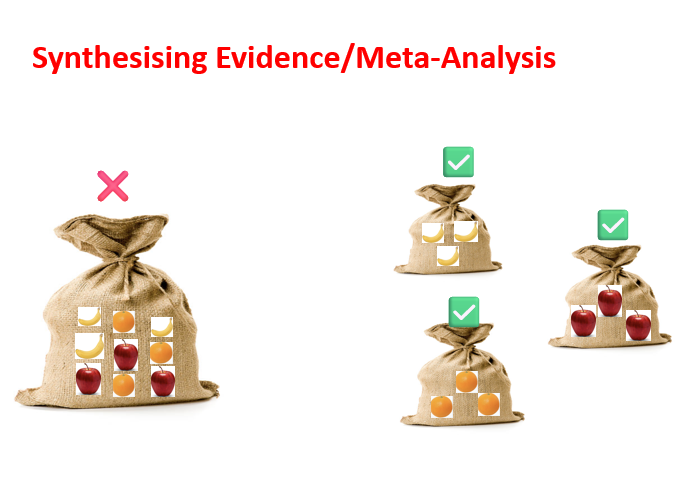

As a result, the usual practice is to synthesise observational evidence irrespective of whether individual studies were designed for causal inference, prediction, or association. This creates a serious interpretability problem. When evidence from mixed frameworks is combined, the resulting synthesis is neither a clear explanation of causal effects nor a neutral descriptive summary. Instead, it becomes an amalgam of heterogeneous analyses that cannot support robust conclusions.

Time for a Better Approach

If observational studies are to fulfil their promise in regulatory and HTA contexts, a more principled approach to evidence synthesis is needed. Central to this approach is ensuring that the inferential framework of included studies aligns with the objectives of the synthesis. Studies conducted under a causal inference framework should be synthesised separately from those aimed at prediction or association. This would allow reviewers to draw conclusions that are coherent, interpretable, and fit for purpose.

Such stratification would represent a significant improvement over current practice. Synthesising causal inference studies together would enable clearer statements about treatment effects. Similarly, synthesising associative or explanatory studies separately could provide valuable contextual or mechanistic insights without overstating causal claims. Importantly, this does not diminish the value of non-causal studies but rather ensures that each type of evidence is used appropriately.
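The stratification described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a recommended analysis pipeline: the `Study` record, the framework labels, and the study values are hypothetical and made up for the example, and inverse-variance fixed-effect pooling is used only as a simple stand-in for whichever synthesis method a review actually specifies.

```python
from collections import defaultdict
from dataclasses import dataclass
from math import sqrt
from typing import Optional


@dataclass
class Study:
    name: str                    # hypothetical study label
    framework: str               # "causal", "prediction", or "association"
    log_effect: Optional[float]  # effect estimate on the log scale, if reported
    se: Optional[float]          # standard error of log_effect, if reported


def stratify(studies):
    """Group studies by their declared modelling framework."""
    groups = defaultdict(list)
    for study in studies:
        groups[study.framework].append(study)
    return dict(groups)


def pool_fixed_effect(studies):
    """Inverse-variance fixed-effect pooled estimate and its standard error."""
    weights = [1.0 / s.se ** 2 for s in studies]
    total = sum(weights)
    pooled = sum(w * s.log_effect for w, s in zip(weights, studies)) / total
    return pooled, sqrt(1.0 / total)


# Made-up values, for illustration only.
studies = [
    Study("A", "causal", -0.20, 0.10),
    Study("B", "causal", -0.10, 0.10),
    Study("C", "association", -0.40, 0.20),
    Study("D", "prediction", None, None),  # reports model performance, not an effect
]

by_framework = stratify(studies)

# Only the causal stratum is pooled into a treatment-effect estimate.
causal_pooled, causal_se = pool_fixed_effect(by_framework["causal"])
print(f"Pooled causal log effect: {causal_pooled:.3f} (SE {causal_se:.3f})")
```

Keeping the framework as an explicit field on each study record forces the reviewer to classify every study before any pooling happens, and the associative and predictive strata remain available for separate, appropriately framed summaries.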

To support this shift, existing guidelines and risk-of-bias tools will need to evolve. Updated guidance should encourage reviewers to identify the modelling framework at the study design stage and to apply assessment criteria that are consistent with that framework. This would improve transparency and reduce the risk of misleading syntheses.

Current policy and regulatory developments make this an especially timely moment for change. Regulatory bodies and HTA agencies are increasingly open to the use of real-world evidence in submissions, especially where RCT evidence is limited or unavailable. In this context, well-conducted syntheses of causal observational studies could play a meaningful role in supporting effectiveness estimates, informing economic models, and reducing decision uncertainty. However, this potential will only be realised if researchers are explicit and disciplined in how observational evidence is selected, assessed, and combined.

Concluding Remarks

Observational studies are no longer peripheral to evidence-based decision-making. Advances in data availability, methodology, and regulatory acceptance have positioned them as a critical complement to randomised trials. However, the way observational evidence is currently synthesised often falls short of its potential, largely due to insufficient attention to the inferential frameworks underpinning individual studies.

Treating all observational studies as methodologically interchangeable obscures important differences in purpose and interpretation. When causal, predictive, and associative analyses are combined indiscriminately, the resulting evidence is difficult to interpret and of limited value for decision-makers. This is not a failure of observational research itself, but of the frameworks used to synthesise it.

An approach that aligns evidence synthesis with the modelling framework of the included studies can offer a clear path forward. By synthesising causal inference studies separately and transparently, researchers can generate evidence that is both credible and decision-relevant. As HTA bodies and regulators continue to embrace real-world evidence, adopting such an approach is not merely desirable but essential.

References

- Sterne JAC, Hernán MA, Reeves BC, et al. ROBINS-I: a tool for assessing risk of bias in non-randomised studies of interventions. BMJ. 2016;355:i4919.

- Page MJ, McKenzie JE, Bossuyt PM, et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ. 2021;372:n71.

- GRADE Working Group. Grading quality of evidence and strength of recommendations. BMJ. 2004;328:1490.

- U.S. Food and Drug Administration. Framework for FDA’s Real-World Evidence Program. FDA; 2018.

- European Medicines Agency. Guideline on registry-based studies. EMA; 2021.

- National Institute for Health and Care Excellence (NICE). Real-world evidence framework. NICE; 2022.

- Concato J, Shah N, Horwitz RI. Randomized, controlled trials, observational studies, and the hierarchy of research designs. N Engl J Med. 2000;342:1887–1892.

- Wells GA, Shea B, O’Connell D, Peterson J, Welch V, Losos M, Tugwell P. The Newcastle–Ottawa Scale (NOS) for assessing the quality of nonrandomised studies in meta-analyses. Ottawa Hospital Research Institute; 2011.