Target trial emulation (TTE) is a causal inference framework that explicitly defines the protocol of a hypothetical randomised trial (the “target trial”) and then uses observational data to emulate each of its components. These components typically include eligibility criteria, treatment strategies, assignment procedures, follow‑up period, outcomes, causal contrasts, and analysis plan. In doing so, TTE is said to borrow strengths from randomised controlled trials (RCTs), particularly their clarity of design, explicit temporal ordering, and focus on causal estimands rather than associations.

The method is commonly recommended to address well‑known biases in observational studies, including immortal time bias, selection bias, and confounding bias. By aligning time zero, treatment assignment, and eligibility criteria, TTE aims to prevent immortal time bias. By clearly defining inclusion criteria and follow‑up, it seeks to reduce selection bias. Confounding bias is addressed through design‑stage decisions and through statistical adjustment methods that stand in for randomisation.

However, the widespread recommendation of TTE often implicitly suggests that such biases are peculiar to real‑world evidence (RWE) studies. This implication can be misleading. Immortal time bias, selection bias, and even confounding can and do occur in RCTs under certain circumstances.

For example, immortal time bias can arise in RCTs with delayed treatment initiation where patients must survive or remain event‑free to be randomised or to receive the intervention. Selection bias may occur through restrictive eligibility criteria, post‑randomisation exclusions, or differential loss to follow‑up. Confounding, while theoretically eliminated by randomisation, can re‑emerge due to chance imbalance in small trials, protocol deviations, non‑adherence, treatment crossover, or informative censoring.
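The point about chance imbalance in small trials can be made concrete with a short simulation. The sketch below is illustrative only: the 30% prevalence of the prognostic factor, the 15‑percentage‑point threshold for calling two arms "imbalanced", and the arm sizes are all assumptions chosen for the example, not values from any real trial.

```python
import random

def imbalance_rate(n_per_arm, prevalence=0.3, threshold=0.15,
                   n_trials=1000, seed=0):
    """Share of simulated trials in which the two arms differ in the
    prevalence of a binary prognostic factor by more than `threshold`."""
    rng = random.Random(seed)
    imbalanced = 0
    for _ in range(n_trials):
        # Under simple randomisation, each arm is an independent random
        # sample from the same population.
        arm_a = sum(rng.random() < prevalence for _ in range(n_per_arm))
        arm_b = sum(rng.random() < prevalence for _ in range(n_per_arm))
        if abs(arm_a - arm_b) / n_per_arm > threshold:
            imbalanced += 1
    return imbalanced / n_trials

small = imbalance_rate(n_per_arm=20)
large = imbalance_rate(n_per_arm=500)
print(f"20 per arm:  {small:.1%} of simulated trials imbalanced")
print(f"500 per arm: {large:.1%} of simulated trials imbalanced")
```

Under these assumptions, a noticeable share of the small trials end up with arms that differ on the prognostic factor, while the large trials almost never do. Randomisation balances covariates in expectation, not in every realised trial.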

In causal inference terms, the most noticeable difference between RCTs and real‑world evidence (RWE) studies lies in how they address confounding bias. RCTs rely on randomisation to balance confounders, whereas RWE studies must rely on analytical adjustment methods such as multivariable regression, propensity score matching, inverse probability weighting, or g‑methods. Beyond this distinction, both study types face similar structural challenges in defining populations, exposures, outcomes, and follow‑up. Consequently, framing TTE as a uniquely superior solution to bias risks overstating its advantages and underappreciating shared vulnerabilities across study designs.

Current “Disciplined” Approaches Addressing Biases in RWE Studies

Long before the rise of target trial emulation, RWE researchers developed a range of disciplined methodological approaches to identify, mitigate, and transparently report bias. These approaches are often tailored to specific bias mechanisms rather than relying on a single unifying framework.

Confounding bias is the most prominent concern in RWE. It arises when treatment assignment is correlated with prognostic factors. Standard approaches include covariate adjustment using regression models, propensity score methods (matching, stratification, weighting), and more advanced causal inference techniques such as marginal structural models and targeted maximum likelihood estimation.
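As a minimal sketch of one of these methods, the example below applies inverse probability weighting to simulated data with a single binary confounder. Everything in it is an assumption made for illustration: the variable names, the treatment probabilities, the baseline risks, and the true risk difference of −0.10. With one binary confounder the propensity score can be estimated as a simple proportion within each stratum; real analyses would normally need a fitted model and many more covariates.

```python
import random

rng = random.Random(42)
TRUE_EFFECT = -0.10  # assumed causal risk difference of treatment

# Simulate (severe, treated, event) for hypothetical patients.
rows = []
for _ in range(50_000):
    severe = rng.random() < 0.4                         # prognostic factor
    treated = rng.random() < (0.8 if severe else 0.2)   # sicker patients are treated more often
    risk = 0.15 + (0.30 if severe else 0.0) + (TRUE_EFFECT if treated else 0.0)
    event = rng.random() < risk
    rows.append((severe, treated, event))

def mean(values):
    values = list(values)
    return sum(values) / len(values)

# Naive contrast: ignores that treated patients are sicker on average.
naive = (mean(e for _, t, e in rows if t)
         - mean(e for _, t, e in rows if not t))

# Propensity score P(treated | severe), estimated within each stratum.
ps = {s: mean(t for s2, t, _ in rows if s2 == s) for s in (True, False)}

def weighted_risk(arm):
    """Inverse-probability-weighted event risk in one treatment arm."""
    num = den = 0.0
    for s, t, e in rows:
        if t == arm:
            w = 1 / ps[s] if arm else 1 / (1 - ps[s])
            num += w * e
            den += w
    return num / den

ipw = weighted_risk(True) - weighted_risk(False)
print(f"true effect:        {TRUE_EFFECT:+.3f}")
print(f"naive difference:   {naive:+.3f}")
print(f"IPW risk difference: {ipw:+.3f}")
```

In this simulated setting the naive comparison makes a beneficial treatment look harmful, because severity drives both treatment and outcome, while the weighted estimate lands close to the assumed true effect. That recovery depends on the confounder being measured and correctly specified, which is exactly the assumption that cannot be verified in real data.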

Selection bias can occur when inclusion in the study or analysis depends on factors related to both exposure and outcome. In RWE, this is often addressed through careful cohort construction, use of incident‑user designs, explicit handling of left truncation, and weighting methods to account for differential selection or attrition.

Immortal time bias is mitigated by time‑dependent exposure definitions or new‑user designs that ensure proper alignment of exposure and follow‑up.
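A toy calculation shows why the exposure definition matters. The six patients below are invented for illustration, with follow‑up measured in days from cohort entry. The first function classifies patients as "ever treated" and counts all of their follow‑up as treated time. The second treats exposure as time‑dependent, so the days before treatment starts are counted as untreated person‑time.

```python
# Hypothetical patients: (day treatment started or None, last day of follow-up, died?)
patients = [
    (None, 100, True),
    (None, 200, True),
    (None, 365, False),
    (150, 365, False),
    (200, 300, True),
    (90, 365, False),
]

def rate_ratio_ever_treated(patients):
    """Biased: all follow-up of ever-treated patients is counted as treated,
    including the time they had to survive before starting treatment."""
    person_time = {"treated": 0, "untreated": 0}
    deaths = {"treated": 0, "untreated": 0}
    for start, end, died in patients:
        group = "treated" if start is not None else "untreated"
        person_time[group] += end
        deaths[group] += died
    return (deaths["treated"] / person_time["treated"]) / (
        deaths["untreated"] / person_time["untreated"])

def rate_ratio_time_dependent(patients):
    """Time-dependent exposure: pre-treatment days count as untreated."""
    person_time = {"treated": 0, "untreated": 0}
    deaths = {"treated": 0, "untreated": 0}
    for start, end, died in patients:
        if start is None:
            person_time["untreated"] += end
            deaths["untreated"] += died
        else:
            person_time["untreated"] += start        # the "immortal" period
            person_time["treated"] += end - start
            deaths["treated"] += died
    return (deaths["treated"] / person_time["treated"]) / (
        deaths["untreated"] / person_time["untreated"])

biased_rr = rate_ratio_ever_treated(patients)
corrected_rr = rate_ratio_time_dependent(patients)
print(f"ever-treated rate ratio:   {biased_rr:.2f}")
print(f"time-dependent rate ratio: {corrected_rr:.2f}")
```

With these made‑up numbers the ever‑treated analysis gives a rate ratio of about 0.32, while the time‑dependent analysis gives about 0.94. The apparent protective effect comes almost entirely from misallocated person‑time rather than from the treatment.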

Information bias, including misclassification of exposure or outcome, is addressed through validation studies, use of high‑quality data sources, sensitivity analyses, and triangulation across multiple datasets. Missing data are handled using multiple imputation or inverse probability weighting under explicit assumptions.

These techniques pre‑date TTE and are widely implemented in pharmacoepidemiology. Importantly, these approaches are often accompanied by detailed protocols, pre‑specified analysis plans, and transparent reporting standards such as STROBE and RECORD. While TTE encourages protocol‑like thinking, it does not replace the need for these established tools. In practice, many high‑quality RWE studies already incorporate elements of TTE implicitly, without explicitly framing the analysis as emulation of a target trial.

Why TTE Studies May Not Be the Answer

Despite its conceptual appeal, target trial emulation may provide a false sense of security. RCTs themselves are widely regarded as the “gold standard” of evidence, yet decades of methodological research demonstrate that they are not immune to bias.

One risk is that TTE may instil false assurance in the minds of researchers and readers, leading to overconfidence in results simply because a study is described as emulating a trial. The label may obscure unresolved issues such as confounding, data quality limitations, or violations of causal assumptions.

More critically, TTE studies may underutilise the disciplined, bias‑specific methods developed within RWE research. By focusing on trial‑like design elements, TTE risks privileging conceptual alignment with RCTs over pragmatic engagement with the realities of real‑world data. In this sense, TTE can be a less powerful tool than comprehensive RWE methodologies that explicitly diagnose and address each bias mechanism.

Visualising RWE in Terms of RCTs — and Beyond

A central tenet of target trial emulation is the visualisation of an RWE study in terms of an RCT design. This exercise can be valuable, but it also exposes fundamental differences between the two paradigms.

RCTs are inherently selective. Eligibility criteria are often strict, leading to study populations that are more homogeneous than those observed in real‑world settings. As a result, potential confounders in RCTs are typically fewer and less complex than in RWE studies. In contrast, RWE encompasses broader populations with greater heterogeneity. Although it is commonly assumed that randomisation balances participants across all potential confounders, the process by which confounding variables are identified and considered is rarely made explicit. Structured approaches for confounder selection, such as causal diagrams or directed acyclic graphs (DAGs), are seldom reported in RCT publications. Instead, it is often assumed that randomisation alone suffices, an assumption that may not hold.

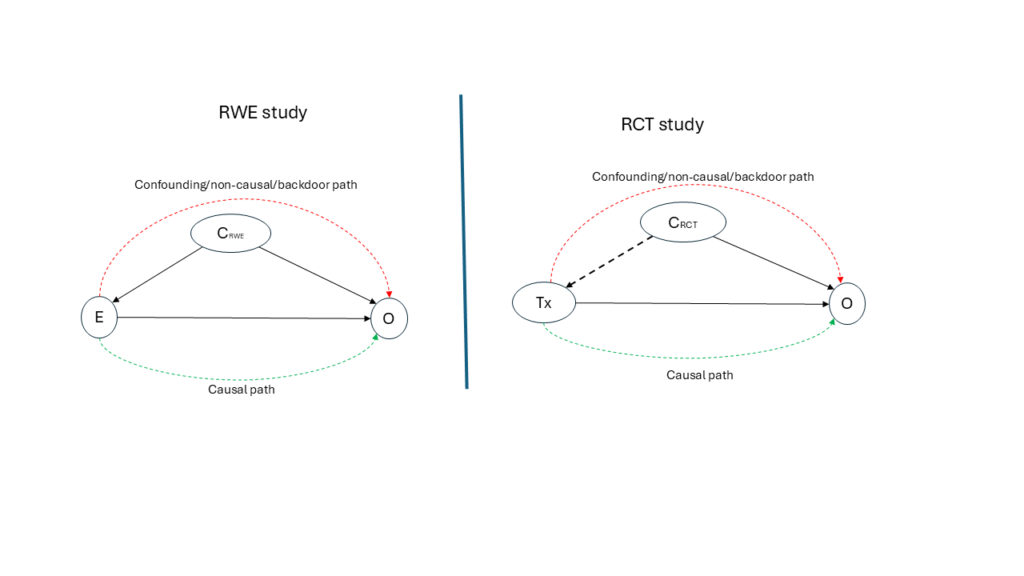

Consider a hypothetical study, with an RWE design on the left and an RCT design on the right in Figure 1. In the RWE DAG, a minimally sufficient set of confounders (CRWE) is explicitly identified and included in the analytical model, represented by solid arrows into both treatment and outcome. In the RCT DAG, a smaller (or empty) set of variables (CRCT) is used primarily for randomisation, represented by broken arrows, and is typically not included in the outcome model. If an RCT were to emulate an RWE study, then, among other things, the CRWE and CRCT sets would need to be equal, and the use of DAGs would need to become routine in RCTs. However, CRCT will almost always be smaller than CRWE, even when covariate‑adaptive or stratified randomisation is used, because DAGs are not an integral part of the RCT toolkit.
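The two diagrams can also be written down as data. The sketch below encodes each DAG as a mapping from a variable to its direct causes and lists the variables that are causes of both treatment and outcome. The variable names (`age`, `severity`, `randomisation`) are hypothetical stand‑ins and are not taken from Figure 1. Listing common causes is only a crude way to propose candidate confounders; it is not a full back‑door criterion check, and it does not guarantee a minimal set.

```python
def ancestors(dag, node, blocked=frozenset()):
    """All ancestors of `node`. `dag` maps each variable to its direct causes.
    Variables in `blocked` are neither returned nor traversed."""
    found, stack = set(), [node]
    while stack:
        for parent in dag.get(stack.pop(), ()):
            if parent not in found and parent not in blocked:
                found.add(parent)
                stack.append(parent)
    return found

def common_causes(dag, treatment, outcome):
    """Variables that cause the treatment and also cause the outcome
    through at least one path that does not pass through the treatment."""
    causes_of_treatment = ancestors(dag, treatment)
    causes_of_outcome = ancestors(dag, outcome, blocked={treatment})
    return causes_of_treatment & causes_of_outcome

# RWE design: prognostic factors influence who gets treated.
rwe_dag = {
    "treatment": ["age", "severity"],
    "outcome": ["treatment", "age", "severity"],
}

# Idealised RCT design: only the randomisation procedure determines treatment.
rct_dag = {
    "treatment": ["randomisation"],
    "outcome": ["treatment", "age", "severity"],
}

c_rwe = common_causes(rwe_dag, "treatment", "outcome")
c_rct = common_causes(rct_dag, "treatment", "outcome")
print("candidate confounders, RWE DAG:", sorted(c_rwe))
print("candidate confounders, RCT DAG:", sorted(c_rct))
```

In the idealised RCT diagram the candidate set is empty, which is the assumption that randomisation is meant to justify. Writing the diagram down makes that assumption explicit and shows what would change if, for example, non‑adherence added an arrow from `severity` into `treatment`.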

This contrast highlights that the key difference between RCTs and RWE is not the presence or absence of bias, but how confounding variables are utilised. RWE studies explicitly model confounding; RCTs implicitly rely on design features that may or may not function as intended in practice. From this perspective, one might reasonably ask whether RCTs should more actively emulate RWE practices (adopting explicit causal modelling, transparent confounder selection, and DAG‑based reasoning) rather than the other way around.

This inversion leads to a provocative idea: instead of target trial emulation (TTE), perhaps we should consider real‑world evidence emulation (RWEE), where the strengths of RWE—breadth, realism, and explicit causal reasoning—inform the design, conduct, and reporting of trials.

Conclusion

The increasing availability of real‑world data (RWD) from electronic health records, registries, claims databases, and digital health platforms has intensified interest in methods that strengthen causal inference outside traditional RCTs. One such method is TTE, which proposes that real‑world evidence (RWE) studies should be explicitly designed to mimic a hypothetical RCT. While this approach has been widely promoted as a remedy for biases in observational research, its growing prominence warrants critical examination. This blog argues that although TTE provides a useful conceptual framework, it is not a panacea. Bias is not exclusive to RWE, RCTs are not immune to methodological flaws, and established “disciplined” approaches in RWE often address biases more directly and transparently than TTE. Ultimately, the question may not be whether RWE should emulate RCTs, but whether RCT thinking should more fully incorporate lessons from RWE.

Target trial emulation has contributed valuable clarity to the design of observational studies, encouraging researchers to think carefully about causal questions and study structure. However, it should not be viewed as a universal solution to bias in RWE, nor as a guarantee of trial‑like validity. Bias is a shared challenge across RCTs and RWE studies, differing more in management than in nature. A balanced approach—one that integrates the conceptual discipline of TTE with the rich methodological toolkit of RWE—offers a more robust path toward credible causal inference.

References

- Suissa S. Immortal time bias in pharmacoepidemiology. American Journal of Epidemiology. 2008;167(4):492–499. doi:10.1093/aje/kwm324.

- Hernán MA, Robins JM. Using Big Data to Emulate a Target Trial When a Randomized Trial Is Not Available. American Journal of Epidemiology. 2016;183(8):758–764. doi:10.1093/aje/kwv254.

- Hernán MA, Robins JM. Causal Inference: What If. Boca Raton, FL: Chapman & Hall/CRC; 2020.

- Rosenbaum PR, Rubin DB. The central role of the propensity score in observational studies for causal effects. Biometrika. 1983;70(1):41–55. doi:10.1093/biomet/70.1.41.

- Austin PC. An introduction to propensity score methods for reducing the effects of confounding in observational studies. Multivariate Behavioral Research. 2011;46(3):399–424. doi:10.1080/00273171.2011.568786.

- Robins JM, Hernán MA, Brumback B. Marginal structural models and causal inference in epidemiology. Epidemiology. 2000;11(5):550–560.

- Pearl J. Causality: Models, Reasoning, and Inference. 2nd ed. Cambridge: Cambridge University Press; 2009.

- Greenland S, Pearl J, Robins JM. Causal diagrams for epidemiologic research. Epidemiology. 1999;10(1):37–48.

- Schulz KF, Altman DG, Moher D; CONSORT Group. CONSORT 2010 Statement: Updated guidelines for reporting parallel group randomised trials. BMJ. 2010;340:c332.

- von Elm E, Altman DG, Egger M, Pocock SJ, Gøtzsche PC, Vandenbroucke JP; STROBE Initiative. The Strengthening the Reporting of Observational Studies in Epidemiology (STROBE) statement. PLoS Medicine. 2007;4(10):e296.

- Benchimol EI, Smeeth L, Guttmann A, Harron K, Moher D, Petersen I, et al. The REporting of studies Conducted using Observational Routinely-collected health Data (RECORD) statement. PLoS Medicine. 2015;12(10):e1001885.